Technology of the future: Robots use foresight to imagine actions (VIDEO)

Published 5 Dec, 2017 15:39 | Updated 6 Dec, 2017 07:33

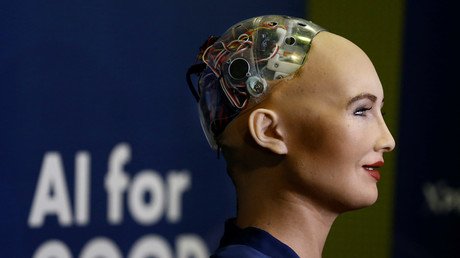

Scientists have developed new technology that allows robots to imagine their future actions, enabling them to maneuver objects they have never encountered before – all without human assistance.

Using a technology called ‘visual foresight,’ robots can imagine what their cameras will see if they move objects in a certain way. Though the robot, called ‘Vestri,’ can currently only use its powers of foresight to imagine a few seconds into the future, it is thought that the technology will one day be used in self-driving cars and help produce more intelligent home assistants.

Researchers at UC Berkeley in California are at the forefront of this breakthrough, which allows robots to learn how to perform these tasks without any prior knowledge about the objects, physics or their environment. Vestri’s visual imagination is learned entirely from scratch. Much like a child, the machine simply plays with the objects. In fact, the researchers took inspiration from what they call babies’ “motor babbling,” programming that kind of learning into the robot. When playtime is over, it builds a predictive model of the world and then uses it to manipulate objects, all without the help of mom and dad.

Using its cameras, the machine generates a variety of scenarios that have not yet happened, imagines the outcome of each, and then chooses the most effective way of moving an object from one place to another.
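For readers who want a feel for how such a generate, imagine and choose loop might work, here is a minimal Python sketch. It is an illustration only, not the Berkeley team's code: the `predict` function is a hypothetical toy stand-in for the robot's learned video-prediction model, and the names `plan`, `distance`, `horizon` and `num_candidates` are assumptions made for this example. The sketch samples random action sequences, "imagines" where each one would leave the object, and keeps the sequence that ends closest to the goal.

```python
import math
import random


def predict(position, action):
    """Hypothetical toy model: the object simply shifts by the action.

    A real system would use a learned model that predicts camera images.
    """
    return (position[0] + action[0], position[1] + action[1])


def distance(a, b):
    """Straight-line distance between two 2D points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def plan(start, goal, horizon=3, num_candidates=200, seed=0):
    """Sample random action sequences and return the best one with its cost."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    best_actions, best_cost = None, float("inf")
    for _ in range(num_candidates):
        # Generate one candidate scenario: a short sequence of random pushes.
        actions = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(horizon)]
        # Imagine the outcome by rolling the model forward step by step.
        position = start
        for action in actions:
            position = predict(position, action)
        # Score the scenario by how far the object ends up from the goal.
        cost = distance(position, goal)
        if cost < best_cost:
            best_actions, best_cost = actions, cost
    return best_actions, best_cost


if __name__ == "__main__":
    actions, cost = plan(start=(0.0, 0.0), goal=(1.0, 1.0))
    print("chosen actions:", actions)
    print("predicted distance to goal:", cost)
```

In this toy version the "imagination" is trivial arithmetic; the point is only the shape of the loop, in which many possible futures are predicted and the most promising one is acted on.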

“In the same way that we can imagine how our actions will move the objects in our environment, this method can enable a robot to visualize how different behaviors will affect the world around it,” Sergey Levine, an assistant professor at Berkeley, said in a university press release. “This can enable intelligent planning of highly flexible skills in complex real-world situations,” he added.

The method relies solely on autonomously collected information, unlike conventional computer-vision methods, in which thousands or even millions of images must be painstakingly labeled and programmed into a machine. For this reason, researchers say the technology is “general and broadly applicable.”

The team will demonstrate Vestri’s abilities at the Neural Information Processing Systems conference in Long Beach, California, on December 5.