MIT scientists eavesdrop using a bag of chips, plant leaves

Published 5 Aug, 2014 01:44 | Updated 5 Aug, 2014 01:29

James Bond used an electric razor as an eavesdropping device. Lucy Ricardo (and many others) used a glass of water. But now MIT researchers say they can record video of a bag of potato chips to listen in on others’ conversations.

Computer scientists and engineers at the Massachusetts Institute of Technology joined with colleagues at Microsoft Research and Adobe Research to create an algorithm that reconstructs audio signals from everyday items like a bag of chips, aluminum foil or the leaves of a potted plant.

“When sound hits an object, it causes the object to vibrate,” Abe Davis, a graduate student in electrical engineering and computer science at MIT and first author on the new paper, said in a statement by the school. “The motion of this vibration creates a very subtle visual signal that’s usually invisible to the naked eye. People didn’t realize that this information was there.”

Davis, joined by Frédo Durand and Bill Freeman, both MIT professors of computer science and engineering; Neal Wadhwa, a graduate student in Freeman’s group; Michael Rubinstein of Microsoft Research, who did his PhD with Freeman; and Gautham Mysore of Adobe Research, then used video to capture those subtle visual signals. The group will present their paper, ‘The Visual Microphone: Passive Recovery of Sound from Video’, at this year’s Siggraph, the premier computer graphics conference, MIT said.

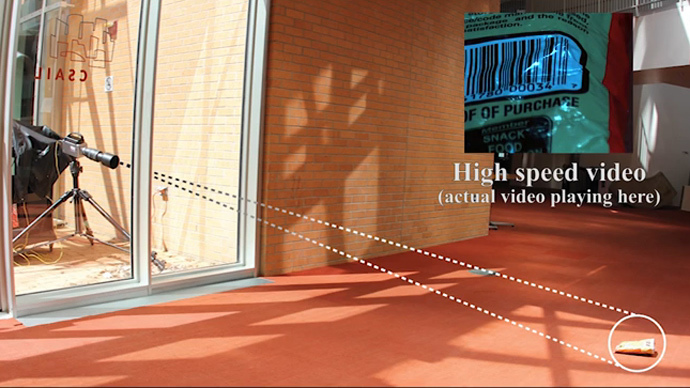

The researchers used only high-speed video to extract the vibrations caused by sound hitting an everyday object and to partially recover the sound that produced them, turning those objects into “visual microphones,” they wrote in their abstract.
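The core idea, recovering sound from tiny motions in video, can be illustrated with a toy example. This sketch is not the researchers’ method: their paper analyzes local phase changes across many image scales and orientations, while this stand-in fits a single global sub-pixel shift per frame using a first-order brightness-constancy approximation. The synthetic edge, the 0.05-pixel motion amplitude and the 440 Hz test tone are all hypothetical values chosen for illustration.

```python
import numpy as np

def simulate_frames(signal, width=64, amplitude_px=0.05):
    """Render a 1-D scanline of a blurred edge, shifted slightly in each frame.

    Hypothetical setup: the edge displacement (in pixels) is proportional to
    the sound pressure at the moment each frame is captured.
    """
    x = np.arange(width, dtype=float)
    shifts = amplitude_px * signal                      # sub-pixel motion per frame
    # Soft edge centered at width/2; tanh gives a blurred boundary.
    return 0.5 + 0.5 * np.tanh((x[None, :] - width / 2 - shifts[:, None]) / 3.0)

def recover_motion(frames):
    """Estimate each frame's global shift with a first-order least-squares fit.

    This is a simplified stand-in, not the paper's phase-based analysis.
    """
    reference = frames.mean(axis=0)                     # average frame ~ object at rest
    gradient = np.gradient(reference)                   # spatial derivative dI/dx
    diffs = frames - reference                          # per-frame intensity change
    # I(x - d) ≈ I(x) - d * dI/dx, so least squares gives d ≈ -<diff, grad> / <grad, grad>
    return -(diffs @ gradient) / (gradient @ gradient)

fps = 2000                                              # high-speed camera frame rate
t = np.arange(fps) / fps                                # one second of frames
tone = np.sin(2 * np.pi * 440 * t)                      # hypothetical 440 Hz tone
recovered = recover_motion(simulate_frames(tone))
print(np.corrcoef(tone, recovered)[0, 1])               # correlation; should be close to 1.0
```

Because each shift is a small fraction of a pixel, no single frame shows visible motion; the signal emerges only when many pixels are combined, which echoes Davis’s point that the vibration is “usually invisible to the naked eye.”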

Reconstructing audio from video requires that the video’s frame rate (the number of frames captured per second) be higher than the frequency of the audio signal; strictly, by the Nyquist sampling theorem, capturing a tone without distortion takes a rate of more than twice its frequency. The group therefore used cameras that capture between 2,000 and 6,000 frames per second (fps), about 33 to 100 times faster than the 60 fps possible with some smartphones, the statement said. Television in the US broadcasts at a rate of 30 fps, while film was historically shot at 24 fps. The fastest commercial high-speed cameras can capture more than 100,000 frames per second.
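The frame-rate arithmetic above can be checked with a few lines of code. This minimal sketch applies the Nyquist limit (highest representable frequency = sampling rate / 2) to the frame rates mentioned in the article; the helper names are hypothetical.

```python
def max_recoverable_hz(fps: float) -> float:
    """Highest audio frequency a sampling rate of `fps` can represent (Nyquist limit)."""
    return fps / 2

def speedup_over(fps: float, baseline_fps: float = 60) -> float:
    """How many times faster `fps` is than a baseline frame rate (default: 60 fps)."""
    return fps / baseline_fps

# Frame rates mentioned in the article: film, US TV, smartphones, the group's cameras,
# and the fastest commercial high-speed cameras.
for fps in (24, 30, 60, 2_000, 6_000, 100_000):
    print(f"{fps:>7} fps -> up to {max_recoverable_hz(fps):>8.0f} Hz "
          f"({speedup_over(fps):.1f}x a 60 fps smartphone)")
```

Under this rule, the 2,000 to 6,000 fps cameras can represent frequencies up to roughly 1,000 to 3,000 Hz, which covers the fundamental frequencies of typical speaking voices, while a 60 fps camera on its own tops out at 30 Hz.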

During some of their experiments, the group was able to use ordinary digital cameras “because of a quirk in the design of most cameras’ sensors,” the statement said. That quirk, the rolling shutter, in which a sensor reads out its rows one after another rather than all at once, allowed the researchers to infer information about high-frequency vibrations even from video recorded at 60 fps.
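To see why a rolling shutter helps, consider when each row of a frame is actually captured. This sketch models a hypothetical sensor (1,080 rows, 60 fps) that reads its rows at evenly spaced times during an assumed 60% of each frame period and then pauses until the next frame. The row count and readout fraction are illustrative assumptions, not values from the paper. Each row then acts as its own time sample, provided, as this sketch simply assumes, that all rows see the same overall motion.

```python
import numpy as np

def row_timestamps(num_frames, rows, fps=60.0, readout_fraction=0.6):
    """Capture time (seconds) of every sensor row under a rolling shutter.

    Hypothetical model: rows are read one after another, evenly spaced, during
    the first `readout_fraction` of each frame period; the rest of the period
    is a gap in which no rows are sampled.
    """
    frame_period = 1.0 / fps
    line_delay = readout_fraction * frame_period / rows    # time between adjacent rows
    frame_starts = np.arange(num_frames)[:, None] * frame_period
    row_offsets = np.arange(rows)[None, :] * line_delay
    return frame_starts + row_offsets                       # shape (num_frames, rows)

times = row_timestamps(num_frames=2, rows=1080)
within_frame_rate = 1.0 / (times[0, 1] - times[0, 0])      # row samples/s during readout
print(f"{within_frame_rate:,.0f} row samples per second")
```

With these assumed numbers, rows are sampled at 1,080 × 60 / 0.6 = 108,000 per second while the sensor is reading, far above the 60 fps frame rate, although the pause between frames leaves regular gaps in the recovered signal.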

“This audio reconstruction wasn’t as faithful as it was with the high-speed camera,” MIT said, but “it may still be good enough to identify the gender of a speaker in a room; the number of speakers; and even, given accurate enough information about the acoustic properties of speakers’ voices, their identities.”

The idea of recovering sound from the physical traces it leaves on objects has appeared in science fiction before. “In the extremely mediocre season 7 X-Files episode ‘Hollywood A.D.’, Mulder and Scully were able to recreate Jesus' voice from the imprint it had made on some clay,” Vice’s Motherboard wrote. “That was the X-Files. This is real.”

The technique could have applications in law enforcement and forensics, where eavesdropping is already employed. Motherboard wonders, though, how useful a visual microphone would really be: “It's not clear exactly when you're going to have the unfettered ability to use a camera, but not a microphone to spy on someone,” they wrote.

Davis has other ideas for its use, though. “We’re recovering sounds from objects,” he said. “That gives us a lot of information about the sound that’s going on around the object, but it also gives us a lot of information about the object itself, because different objects are going to respond to sound in different ways.”

He calls these potential uses a “new kind of imaging.” In that vein, the researchers have begun trying to determine material and structural properties of objects from their visible response to short bursts of sound.
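The article does not describe how the researchers analyze these responses. As one simple, hypothetical proxy for how an object responds to a short burst of sound, this sketch finds the dominant frequency at which a recovered vibration “rings” using a Fourier transform. The 180 Hz decaying sinusoid and its decay rate are made-up stand-ins for a measured response, not data from the study.

```python
import numpy as np

def dominant_frequency(signal, sample_rate):
    """Return the frequency (Hz) with the largest magnitude in the signal's spectrum."""
    spectrum = np.abs(np.fft.rfft(signal - signal.mean()))   # remove DC offset first
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate)
    return freqs[np.argmax(spectrum)]

# Hypothetical measurement: an object rings after a short burst of sound,
# modeled as a decaying 180 Hz sinusoid recovered from 2,000 fps video.
sample_rate = 2000
t = np.arange(sample_rate) / sample_rate                      # one second of samples
ringing = np.exp(-8 * t) * np.sin(2 * np.pi * 180 * t)
print(dominant_frequency(ringing, sample_rate))
```

Objects of different size, stiffness or material would, in general, ring at different frequencies and die away at different rates, which is the kind of difference Davis describes.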