'Just like humans': Robot simulation can move, interact without instruction

Artificial intelligence has advanced yet again, this time with a robot simulation that “works just like humans.” The simulated robots can move and interact with one another without any instructions.

The simulation was created by Georg Martius of the Institute of Science and Technology Austria (IST Austria) and Ralf Der of the Max Planck Institute for Mathematics in the Sciences in Leipzig, who were studying how artificial neural networks can develop autonomous, self-directed behavior.

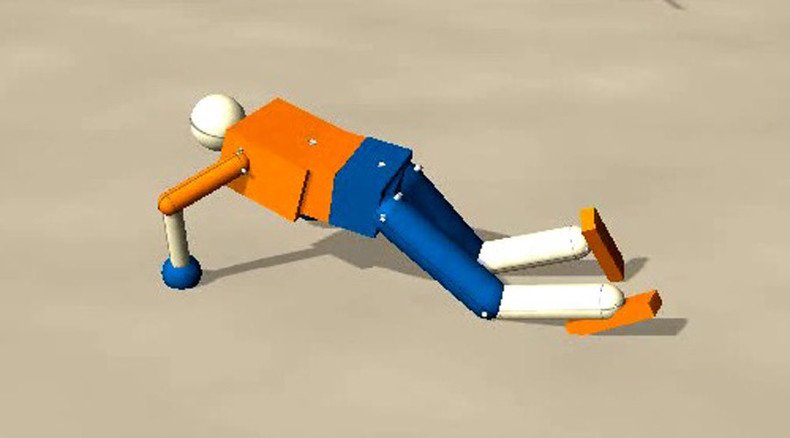

The researchers used “bioinspired” robots in physically realistic computer simulations. The robots received sensory input from their bodies, but were not given any instructions or tasks to complete.

The robots successfully developed sensorimotor intelligence, using feedback from their surroundings to learn how to crawl, walk on changing surfaces, and cooperate with other robots.

“It works just like us humans,” Martius said, as quoted by The Local. “When we touch something, a signal is transmitted to the brain, processed, and converted into a muscle movement.”

The research, published in PNAS (Proceedings of the National Academy of Sciences), led the scientists to theorize that self-directed behavior can arise from the “synaptic plasticity” of the nervous system. Synaptic plasticity is described as a coupling mechanism that allows a simple neural network to generate constructive movements.
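For readers who want a feel for the idea, the loop described above can be sketched in a few lines of Python. This is only a toy illustration, not the researchers' actual rule or simulator: the function name `run_sensorimotor_loop`, the stand-in "body" dynamics, and the generic Hebbian-style update with decay are all assumptions made for the example. What it shares with the description is the structure: sensor readings drive motor commands, and the connection weights change based on what the sensors report back, with no task or goal given.

```python
import numpy as np


def run_sensorimotor_loop(steps=200, n=2, rate=0.05, seed=0):
    """Toy closed loop: sensors -> controller -> motors -> body -> sensors."""
    rng = np.random.default_rng(seed)
    # Hypothetical controller weights mapping sensor values to motor commands.
    weights = rng.normal(scale=0.1, size=(n, n))
    sensors = rng.normal(scale=0.1, size=n)
    motor_log = np.zeros((steps, n))

    for t in range(steps):
        # The controller turns the current sensor reading into a motor command.
        motors = np.tanh(weights @ sensors)
        # Stand-in "body" (an assumption): sensors lag behind the motors,
        # plus a little noise from the environment.
        noise = rng.normal(scale=0.01, size=n)
        next_sensors = 0.8 * sensors + 0.5 * motors + noise
        # Generic Hebbian-style plasticity with decay: weights drift toward
        # the correlation between motor output and the resulting sensation.
        weights += rate * (np.outer(motors, next_sensors) - weights)
        sensors = next_sensors
        motor_log[t] = motors

    return weights, motor_log


if __name__ == "__main__":
    final_weights, motor_log = run_sensorimotor_loop()
    print(final_weights.shape, motor_log.shape)
```

Nothing in the loop says what the "robot" should do. Whatever behavior appears comes from the coupling between the weights, the motors, and the sensory feedback, which is the point the researchers make about their own, more sophisticated rule.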

Martius and Der suggested the results can help improve the understanding of evolution.

“It is commonly assumed that leaps in evolution require mutations in both the morphology and the nervous system, but the probability for both rare events to happen simultaneously is vanishingly low,” Martius said.

“But if evolution was indeed in line with our rule, it would only require bodily mutations, a much more productive strategy. Imagine an animal just evolving from water to land. Learning how to live on land during its own lifetime would be very beneficial for its survival.”